PyTorch 2026: The Foundation for Multimodal AI and Agentic Systems

Remember when AI was just a glorified autocomplete that could barely write a coherent paragraph? Yeah, those days are dead. Welcome to 2026, where PyTorch isn't just a deep learning framework—it's becoming the backbone of autonomous agents that can see, hear, reason, and probably plot world domination while you're sleeping.

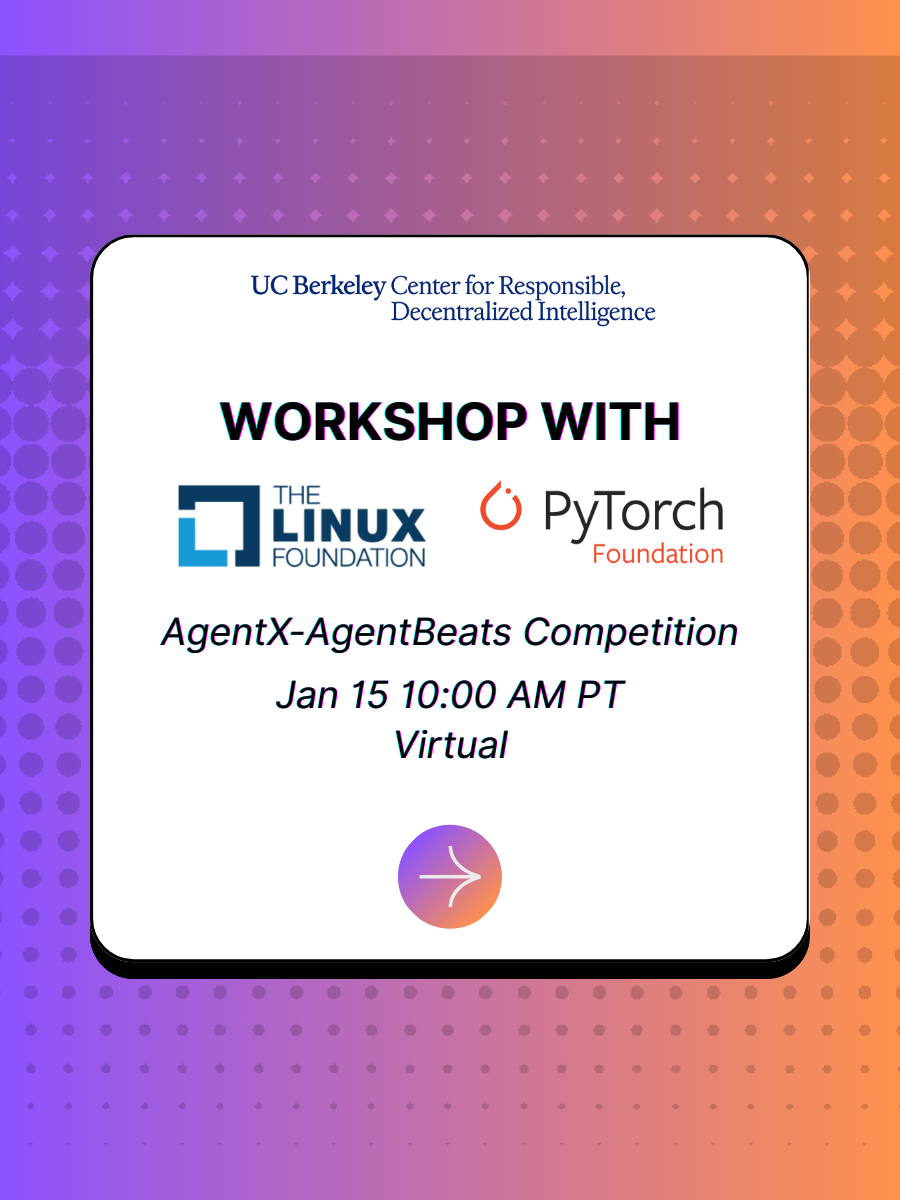

Image: AgentX-AgentBeats Competition banner showcasing PyTorch Foundation's role in advancing agentic AI evaluation. Source: Berkeley RDI

The AgentX-AgentBeats Revolution

On February 18, 2026, the PyTorch Foundation is hosting a workshop that's about as close to a coming-out party for production-grade agentic AI as you're going to get. The AgentX-AgentBeats competition isn't your run-of-the-mill hackathon—it's a $1M+ prize pool throwdown that's attracting 1,300+ teams globally. The premise? Create benchmarks for AI agents (Phase 1), then build agents that crush those benchmarks (Phase 2). It's like a meta-competition where the evaluation itself gets gamified.

Image: Berkeley RDI's Agentic AI Weekly highlighting the workshop series. Source: Substack/Berkeley RDI

Why PyTorch? Why Now?

Here's the thing: multimodal AI in 2026 isn't just about processing text, images, and audio simultaneously; it's also big business. McKinsey's 2025 report shows 65% of enterprises are already testing or deploying multimodal AI solutions, and the market's exploding from $12.5B in 2024 to a projected $65B by 2030. That's a 420% increase that would make even the most aggressive crypto bro blush.
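
For the skeptics, the growth math is easy to check. The sketch below uses only the two market-size figures quoted above; the compound annual growth rate is a derived number, not one from the McKinsey report, and it assumes smooth growth across the six years from 2024 to 2030.

```python
# Market-size figures quoted above, in billions of US dollars.
start_value = 12.5   # 2024
end_value = 65.0     # projected for 2030
years = 2030 - 2024

# Total growth over the whole period, as a percentage of the starting value.
total_growth_pct = (end_value - start_value) / start_value * 100

# Implied compound annual growth rate (assumes smooth year-over-year growth).
cagr_pct = ((end_value / start_value) ** (1 / years) - 1) * 100

print(f"Total growth: {total_growth_pct:.0f}%")
print(f"Implied CAGR: {cagr_pct:.1f}%")
```

The total comes out at 420%, and the implied annual rate lands a little under 32%, which is a more useful number when comparing against other market forecasts.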

PyTorch's positioning here is strategic. The framework's modularity, dynamic computation graphs, and now-native support for transformer architectures across modalities make it the natural home for systems that need to:

- Fuse vision, language, and audio in real-time

- Maintain state across multi-hour workflows

- Coordinate multiple specialized agents (hello, multi-agent systems)

- Scale from edge devices to data centers without architectural rewrites

The Agentic Shift: From "Brain in a Jar" to Embodied Intelligence

Digital ASEAN's recent masterclass called it perfectly: we spent two years treating AI like a "brain in a jar"—brilliant but blind. 2026 changes that. We're giving AI eyes, ears, and hands, and PyTorch is providing the nervous system stitching it all together.

Vision encoders transform images into token streams that feed directly into transformers alongside text prompts. Audio processing isn't a separate pipeline anymore—it's just another modality tokenized and fed into the same attention mechanisms. This native multimodality is what lets a customer support agent look at a screenshot of a broken product, read the user's complaint text, and hear the frustration in their voice—all simultaneously.
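
To make "just another modality" concrete, here is a minimal PyTorch sketch of the idea: each modality is projected into the same embedding space, the token sequences are concatenated, and a single transformer encoder attends over all of them. `TinyMultimodalFusion`, its dimensions, and the random inputs are all hypothetical choices for illustration; real systems use pretrained vision and audio encoders and add positional information, which this toy version leaves out.

```python
import torch
import torch.nn as nn


class TinyMultimodalFusion(nn.Module):
    """Hypothetical toy model: one transformer attends over all modalities."""

    def __init__(self, vocab_size=1000, patch_dim=48, audio_dim=40, d_model=64):
        super().__init__()
        # Each modality gets its own projection into the shared token space.
        self.text_embed = nn.Embedding(vocab_size, d_model)
        self.image_proj = nn.Linear(patch_dim, d_model)
        self.audio_proj = nn.Linear(audio_dim, d_model)
        # Learned vector per modality so the encoder can tell tokens apart.
        self.modality_embed = nn.Embedding(3, d_model)
        layer = nn.TransformerEncoderLayer(
            d_model=d_model, nhead=4, dim_feedforward=128, batch_first=True
        )
        self.encoder = nn.TransformerEncoder(layer, num_layers=2)

    def forward(self, text_ids, image_patches, audio_frames):
        text = self.text_embed(text_ids) + self.modality_embed.weight[0]
        image = self.image_proj(image_patches) + self.modality_embed.weight[1]
        audio = self.audio_proj(audio_frames) + self.modality_embed.weight[2]
        # One sequence: image tokens, then audio tokens, then text tokens.
        tokens = torch.cat([image, audio, text], dim=1)
        return self.encoder(tokens)


model = TinyMultimodalFusion().eval()
text_ids = torch.randint(0, 1000, (2, 12))   # batch of 2, 12 text tokens
image_patches = torch.randn(2, 16, 48)       # 16 flattened image patches
audio_frames = torch.randn(2, 20, 40)        # 20 audio feature frames

with torch.no_grad():
    fused = model(text_ids, image_patches, audio_frames)

print(fused.shape)  # torch.Size([2, 48, 64])
```

The output has 48 positions per example (16 image, 20 audio, 12 text), and every position has attended to every other one, regardless of which modality it came from. That shared attention is the whole trick.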

Image: Workshop announcement for PyTorch Foundation's agentic AI event. Source: Luma/Berkeley RDI

Production-Ready or Just Production-Hype?

Here's where I channel my inner skeptic: we've been promised "production-ready AI" since 2022. But PyTorch's focus on OpenEnv—open-source reinforcement learning environments—suggests they're serious about the infrastructure gap, not just model performance. The workshop includes deep dives into torchtitan, torchforge, and streamlined Hugging Face submissions. That's not flashy demo-ware; that's the plumbing that makes AI agents actually deployable.
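
If "reinforcement learning environment" sounds abstract, the core pattern is small: an environment exposes a reset/step interface, and an agent interacts with it in a loop while collecting rewards. The sketch below is a generic illustration of that pattern in plain Python. `CountdownEnv`, `StepResult`, `greedy_policy`, and `run_episode` are invented names, and none of this is OpenEnv's actual API.

```python
from dataclasses import dataclass


@dataclass
class StepResult:
    observation: int
    reward: float
    done: bool


class CountdownEnv:
    """Hypothetical toy environment: the agent must bring a counter to zero."""

    def __init__(self, start: int = 5, max_steps: int = 20):
        self.start = start
        self.max_steps = max_steps
        self.value = start
        self.steps = 0

    def reset(self) -> int:
        self.value = self.start
        self.steps = 0
        return self.value

    def step(self, action: int) -> StepResult:
        # The only valid actions are -1 (decrement) and +1 (increment).
        if action not in (-1, 1):
            raise ValueError("action must be -1 or 1")
        self.value += action
        self.steps += 1
        solved = self.value == 0
        done = solved or self.steps >= self.max_steps
        # Small penalty per step, bonus on reaching the goal.
        reward = 1.0 if solved else -0.1
        return StepResult(self.value, reward, done)


def greedy_policy(observation: int) -> int:
    """Always move the counter toward zero."""
    return -1 if observation > 0 else 1


def run_episode(env: CountdownEnv, policy) -> float:
    """Run one episode and return the total reward collected."""
    observation = env.reset()
    total_reward = 0.0
    done = False
    while not done:
        result = env.step(policy(observation))
        observation = result.observation
        total_reward += result.reward
        done = result.done
    return total_reward


env = CountdownEnv(start=5)
total_reward = run_episode(env, greedy_policy)
print(f"steps: {env.steps}, total reward: {total_reward:.1f}")
```

Swap the toy counter for a browser, a codebase, or a customer support queue and the loop looks the same. Standardizing that interface is the unglamorous part that lets many teams train and evaluate agents against shared environments.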

The real test? Whether the 1,300+ AgentBeats teams can build benchmarks that measure not just capabilities but also safety, robustness, and reproducibility. As one commenter noted on Berkeley RDI's coverage, judging criteria around safety often lag behind capability metrics. If PyTorch can close that gap, we're talking about something actually transformative, not just technically impressive.

The Enterprise Reality Check

Gartner projects 40% of enterprise applications will embed AI agents by mid-2026, up from less than 5% in early 2025. That's at least an eightfold jump in adoption. But here's the kicker: Celonis surveyed 1,649 leaders and found 76% admit their business processes aren't ready for AI agents. The gap? Infrastructure, process clarity, and governance, not model capabilities.

This is where PyTorch's partnership with the Linux Foundation becomes interesting. It's not just about code anymore; it's about building the standards, protocols, and tooling that let enterprises actually deploy these systems without creating compliance nightmares or security black holes.

The Bottom Line

PyTorch 2026 isn't trying to be the flashiest framework on the block. It's positioning itself as the boring-but-essential infrastructure layer for the agentic AI explosion. The AgentX-AgentBeats workshop on February 18th isn't just a competition finale—it's a signal that PyTorch is betting the next decade on autonomous, multimodal systems.

Will it work? Ask me in March after Phase 2 submissions roll in. But for now, watching PyTorch transition from research darling to agentic infrastructure backbone is like watching your favorite indie band finally sign the deal that puts them in every stadium. Hope they don't lose the edge that made them great in the first place.

Sources

- Berkeley RDI - AgentX-AgentBeats Competition: https://rdi.berkeley.edu/agentx-agentbeats.html

- PyTorch Event Page: https://pytorch.org/event/agentx-agentbeats-competition-agentic-rl-and-environments-workshop/

- Berkeley RDI Agentic AI Weekly (Feb 4, 2026): https://berkeleyrdi.substack.com/p/agentic-ai-weekly-berkeley-rdi-february

- Kanerika - Multimodal AI Agents Architecture: https://kanerika.com/blogs/multimodal-ai-agents/

- FutureAGI - Multimodal AI Trends 2025: https://futureagi.com/blogs/multimodal-ai-2025

- Digital ASEAN - Multi-Modal Autonomous Agents Masterclass: https://www.youtube.com/watch?v=oLcK1rpPMCo

- YouTube - Orchestrate or Obsolete: Building 2026 AI Agent Squads: https://www.youtube.com/watch?v=9v3bDY4TCGc
